Artificial Intelligence: Can it Help in the COVID-19 Crisis? – Part 3

Authors: Krishna Arangode, Anand Srinivasan

This article is the third of a three-part series that attempts to evaluate the capabilities of Artificial Intelligence in light of the current coronavirus pandemic.

COVID-19 & the Path to Recovery

Economists and market analysts are divided on exactly when the economy will return to normal. While the recent economic stimulus is likely to help, the quarantine and social distancing required to slow the spread mean that any recovery is at least three to four months away. At the time of publishing, roughly one-third of the United States population is under shelter-at-home measures.

Given this dramatic impact on day-to-day life, businesses need to think about their analytics from three perspectives:

- In the current state of high uncertainty, how can analytics support decision-making in the near term?

- When day-to-day life starts to return to normal, should existing analytical solutions be restored as they were, or will they require recalibration?

- In the distant future, once the COVID-19 impact has been mitigated, how should analytical systems treat the data from this period? Should it be considered one long outlier period and excluded, or should it be adjusted so that it can still be used in the future?

Modeling in the Zone of Uncertainty:

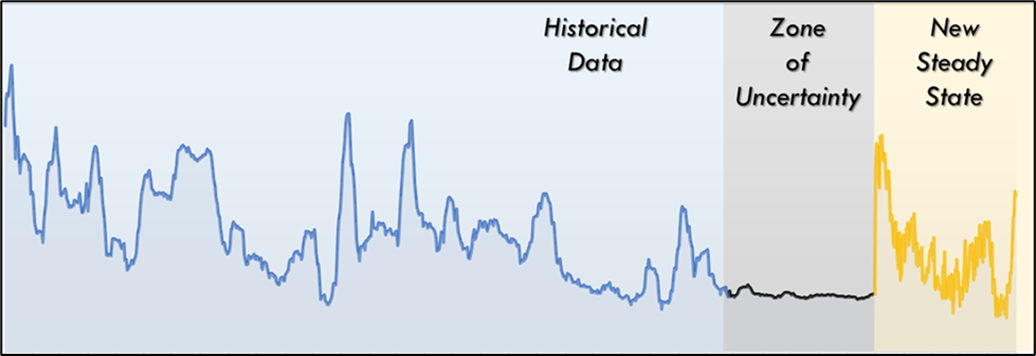

The majority of AI/ML models built to support decision-making in a steady-state environment rely on a long history of data. In these unprecedented times, history is simply not an indicator of the current environment. Another potential drawback of steady-state models is that they respond only weakly to recent trends. To address the fast-moving situation we’re in right now, analytical models need to be nimble enough to pick up trends emerging in the most recent data and base near-term predictions on them. ML approaches such as ensemble models can capture both long- and short-term trends efficiently in a single model, and they can be calibrated to react more strongly to recent trends.

One prudent choice in these uncertain times is to combine the outputs of these models in a simulation framework and plan against a variety of scenarios. One can then pick the “optimal” decision based on the scenario closest to the events unfolding in real time. In forecasting, there is a well-established practice of selecting, from a set of candidate models, the one that produces the most accurate forecasts for a given scenario. That practice could be extended across the multiple KPIs that drive the business, so that the simulation scenario closest to the actuals is the one the business implements.
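A minimal sketch of the two ideas above, under simplifying assumptions: a two-member ensemble that blends a long-history level with a recent-window level (the hypothetical `recency_weight` parameter stands in for the calibration toward recent trends), and a selection step that picks the simulated scenario closest to actuals across several KPIs using mean absolute percentage error. The function names, flat-level components, error metric, and all numbers are illustrative choices, not a prescribed method.

```python
from __future__ import annotations

from statistics import fmean


def long_term_level(history: list[float]) -> float:
    """Long-history component: the mean of the full series."""
    return fmean(history)


def short_term_level(history: list[float], window: int = 4) -> float:
    """Recent-trend component: the mean of the last `window` observations."""
    return fmean(history[-window:])


def blended_forecast(history: list[float], horizon: int,
                     recency_weight: float = 0.7, window: int = 4) -> list[float]:
    """Flat-level ensemble forecast; a larger recency_weight reacts faster to recent shifts."""
    level = (recency_weight * short_term_level(history, window)
             + (1.0 - recency_weight) * long_term_level(history))
    return [level] * horizon


def closest_scenario(scenarios: dict[str, dict[str, float]],
                     actuals: dict[str, float]) -> str:
    """Return the scenario whose KPI projections best match actuals (lowest mean absolute % error)."""
    def mape(projection: dict[str, float]) -> float:
        return fmean(abs(projection[k] - actuals[k]) / abs(actuals[k]) for k in actuals)
    return min(scenarios, key=lambda name: mape(scenarios[name]))


# Hypothetical weekly demand: a stable level of 100 that drops to 60 in the last 4 weeks.
history = [100.0] * 8 + [60.0] * 4
forecast = blended_forecast(history, horizon=3)  # about 68.0 per week (0.7 * 60 + 0.3 * 86.67)

# Hypothetical simulated scenarios compared against this period's actuals.
scenarios = {
    "fast_recovery": {"revenue": 120.0, "orders": 1100.0},
    "slow_recovery": {"revenue": 90.0, "orders": 800.0},
}
actuals = {"revenue": 95.0, "orders": 850.0}
best = closest_scenario(scenarios, actuals)  # "slow_recovery"
```

A purely long-history model would forecast about 86.7 here; raising `recency_weight` pulls the forecast toward the recent level of 60. In practice the components would be real forecasting models and the weight would be tuned on holdout data.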

Another radical idea to consider is looking back at specific past events that may have affected the business dramatically. These could be localized events, like hurricanes impacting the Texas/Louisiana markets, or global ones, like the 2008 financial crisis, H1N1, SARS, etc. Without trying to extract the direct implications for COVID-19 from these events, one could assess directional indicators of customer and market shifts by analyzing data from during and right after these events. Normalization techniques can help borrow learnings from one or more such events to understand the impact of COVID-19.
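One simple normalization, sketched here as an assumption rather than the authors’ method: express each past event’s post-event trajectory as a ratio to its own pre-event baseline, align the events on their start dates, and average the ratios into a composite profile. Because the result is unitless, it can be scaled by the current baseline to produce a directional (not predictive) path. The function names, baseline length, and example series are hypothetical.

```python
from __future__ import annotations

from statistics import fmean


def normalize_to_baseline(series: list[float], event_start: int,
                          baseline_len: int = 4) -> list[float]:
    """Divide post-event values by the mean of the `baseline_len` values before the event."""
    if event_start < baseline_len:
        raise ValueError("need at least baseline_len observations before the event")
    baseline = fmean(series[event_start - baseline_len:event_start])
    return [v / baseline for v in series[event_start:]]


def composite_event_profile(events: list[tuple[list[float], int]], length: int,
                            baseline_len: int = 4) -> list[float]:
    """Average the normalized trajectories of several events, aligned on each event's start."""
    profiles = [normalize_to_baseline(s, start, baseline_len)[:length] for s, start in events]
    if any(len(p) < length for p in profiles):
        raise ValueError("every event needs at least `length` post-event observations")
    return [fmean(step) for step in zip(*profiles)]


# Hypothetical weekly sales around two past events; index 4 is the first affected week.
hurricane = [100.0, 100.0, 100.0, 100.0, 70.0, 85.0, 95.0, 100.0]
crisis = [50.0, 50.0, 50.0, 50.0, 40.0, 40.0, 45.0, 48.0]

profile = composite_event_profile([(hurricane, 4), (crisis, 4)], length=4)
# profile is roughly [0.75, 0.825, 0.925, 0.98]

current_baseline = 200.0  # hypothetical pre-COVID weekly level
directional_path = [r * current_baseline for r in profile]
```

Averaging ratios treats every past event as equally relevant; weighting events by similarity to the current situation is a natural refinement.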

However, one key consideration with the above techniques is adoption and continuity of business decisions. With uncertainty already abounding in customer behavior, it’s best to avoid adding uncertainty from the analytical models themselves. Because these techniques are more likely to pick up recent trends, they are also likely to switch their recommended decisions very quickly. To counter this, users should change a decision only if the resulting improvement in KPIs is material enough to justify the change. The goal is to signal a change in direction only when there is a significant enough deviation from the previous decision.
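The rule in the previous paragraph amounts to a hysteresis threshold. The sketch below assumes that, in each period, the model reports an expected KPI (higher is better, strictly positive) for every candidate decision; the currently adopted decision is replaced only if the best candidate beats it by at least a hypothetical `min_relative_gain` margin. The 5% default is an illustrative value, not a recommendation.

```python
from __future__ import annotations


def stabilize_decisions(kpi_by_period: list[dict[str, float]],
                        min_relative_gain: float = 0.05) -> list[str]:
    """Adopt the model's best decision only when it beats the current one by a material margin.

    kpi_by_period: for each period, the model's expected KPI for every candidate
    decision (assumed higher-is-better and strictly positive).
    """
    adopted = []
    current = None
    for kpis in kpi_by_period:
        best = max(kpis, key=kpis.get)
        if current is None:
            current = best  # first period: take the model's recommendation
        else:
            baseline = kpis[current]  # re-estimated KPI of the decision already in place
            if baseline <= 0:
                raise ValueError("this sketch assumes strictly positive KPI values")
            gain = (kpis[best] - baseline) / baseline
            if gain >= min_relative_gain:
                current = best  # switch only on a material improvement
        adopted.append(current)
    return adopted


# Hypothetical expected KPIs for two candidate decisions over four periods.
periods = [
    {"A": 100.0, "B": 98.0},
    {"A": 100.0, "B": 102.0},  # B better by 2%: not material, keep A
    {"A": 100.0, "B": 110.0},  # B better by 10%: switch to B
    {"A": 104.0, "B": 100.0},  # A better by 4%: not material, keep B
]
stable = stabilize_decisions(periods)            # ["A", "A", "B", "B"]
naive = stabilize_decisions(periods, 0.0)         # ["A", "B", "B", "A"]
```

Comparing against the current decision’s re-estimated KPI in the same period, rather than the value recorded when it was adopted, keeps the comparison fair as conditions change.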

Modeling in the New Steady State:

With the global economy so tightly interlinked, and given the extent of economic devastation from COVID-19, it is entirely possible that the post-COVID world will never look like the historical data. In that scenario, it is also important to consider how models that rely on a longer history would behave. Analytical models should consider blending near-term models with long-term models so that historical patterns are retained while accounting for the new steady-state levels. This blending could take the form of Bayesian statistics, using the statistical variation in the data to determine how much weight to give historical data versus recent data for prediction purposes.
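One standard instance of such Bayesian blending, shown here as an illustration rather than the authors’ specific approach, is a normal-normal conjugate update of a series’ level. The historical mean acts as the prior, and its variance (the hypothetical `hist_level_var`) encodes how far the level could plausibly have shifted; recent observations with an assumed noise variance act as the data. The posterior weight on recent data grows as more recent observations accumulate or as the prior becomes less certain. Normal noise and known variances are simplifying assumptions.

```python
from __future__ import annotations

from statistics import fmean


def bayesian_level_blend(hist_mean: float, hist_level_var: float,
                         recent_obs: list[float], noise_var: float) -> tuple[float, float, float]:
    """Normal-normal conjugate update of a series' level.

    hist_mean, hist_level_var: prior belief about the level, taken from long history.
    recent_obs, noise_var: recent observations and their assumed noise variance.
    Returns (posterior_mean, posterior_var, weight_on_recent).
    """
    n = len(recent_obs)
    prior_precision = 1.0 / hist_level_var
    data_precision = n / noise_var
    w_recent = data_precision / (prior_precision + data_precision)
    posterior_mean = w_recent * fmean(recent_obs) + (1.0 - w_recent) * hist_mean
    posterior_var = 1.0 / (prior_precision + data_precision)
    return posterior_mean, posterior_var, w_recent


# Hypothetical: historical level 100, four recent weeks averaging 73.
mean4, var4, w4 = bayesian_level_blend(100.0, 25.0, [70.0, 74.0, 72.0, 76.0], 100.0)
# mean4 = 86.5, w4 = 0.5: history and recent data carry equal weight

# Sixteen weeks at the new level shift the weight toward recent data.
mean16, var16, w16 = bayesian_level_blend(100.0, 25.0, [73.0] * 16, 100.0)
# w16 = 0.8, mean16 = 78.4 (approximately)
```

As the new steady state persists, the blended level converges toward the recent data without discarding the historical prior abruptly.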

Another aspect to consider in the new steady state is the disruption to consumer sentiment and purchase patterns that this event will have caused during the “Zone of Uncertainty”. It is important to distinguish purchase from consumption in this period. For example, most stores have been wiped clean of toilet paper, but that doesn’t necessarily indicate increased consumption. This “stock-pile” of toilet paper is likely to last households for some time to come, implying a lower purchase frequency in the future. When planning manufacturing capacity, it is critical to understand how such anomalies can be accounted for.
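The purchase-versus-consumption distinction can be made concrete with a toy inventory model. The sketch assumes consumption stays flat at its pre-crisis level, estimates the household stockpile as purchases in excess of that level during the buying spike, and then projects purchases while the stockpile is drawn down. The function names and figures are hypothetical; real demand would also vary by household and over time.

```python
from __future__ import annotations


def estimate_stockpile(spike_purchases: list[float], baseline_consumption: float) -> float:
    """Units bought beyond normal consumption during the buying spike."""
    return sum(max(p - baseline_consumption, 0.0) for p in spike_purchases)


def simulate_purchases(weekly_consumption: float, stockpile: float, weeks: int) -> list[float]:
    """Weekly purchases needed when households consume from an existing stockpile first."""
    purchases = []
    stock = stockpile
    for _ in range(weeks):
        drawn = min(stock, weekly_consumption)  # use stored units before buying new ones
        stock -= drawn
        purchases.append(weekly_consumption - drawn)
    return purchases


# Hypothetical: normal consumption is 10 units/week; panic buying of 30 and 15 units.
stockpile = estimate_stockpile([30, 15], baseline_consumption=10)  # 25 excess units
projected = simulate_purchases(10, stockpile, weeks=5)             # [0, 0, 5, 10, 10]
```

Although consumption never changed, purchases spike and then fall below normal for several weeks, which is the pattern a capacity plan would otherwise misread as a demand shift.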

Longer term, data from this period needs to be either ignored in its entirety or marked as an anomaly and treated appropriately. Businesses that shut down completely during this period may find it easier to identify, while those that are only seeing an impact need to evaluate closely which period to treat as an anomaly, bearing in mind that their own recovery could extend well beyond the point at which the economy returns to normal. Patterns like the toilet-paper example above also need to be accounted for in data normalization.
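A minimal sketch of the two treatments named above, assuming a single contiguous anomaly window given by index (hypothetically chosen to run through the business’s own recovery, not just the shutdown): either drop the flagged points, or replace them with the mean of a pre-anomaly baseline. The returned flags can also serve as an indicator variable in downstream models. The function name, `baseline_len`, and the example series are illustrative; seasonal businesses would likely prefer a same-period-last-year baseline.

```python
from __future__ import annotations

from statistics import fmean


def treat_anomaly(values: list[float], start: int, end: int,
                  method: str = "exclude", baseline_len: int = 4) -> tuple[list[float], list[bool]]:
    """Flag values[start:end] as anomalous and either drop or impute them.

    Returns (treated_values, flags); flags always align with the original series.
    """
    if not 0 < start < end <= len(values):
        raise ValueError("need 0 < start < end <= len(values)")
    flags = [start <= i < end for i in range(len(values))]
    if method == "exclude":
        return [v for v, f in zip(values, flags) if not f], flags
    if method == "impute":
        baseline = fmean(values[max(0, start - baseline_len):start])  # pre-anomaly level
        return [baseline if f else v for v, f in zip(values, flags)], flags
    raise ValueError(f"unknown method: {method!r}")


# Hypothetical weekly series with a two-week collapse at indices 4-5.
series = [10.0, 12.0, 11.0, 13.0, 2.0, 3.0, 12.0, 13.0]
dropped, flags = treat_anomaly(series, 4, 6, method="exclude")  # anomaly weeks removed
imputed, _ = treat_anomaly(series, 4, 6, method="impute")       # anomaly weeks set to 11.5
```

Excluding keeps the history honest but leaves a gap that time-series models must handle; imputing preserves regular spacing at the cost of inserting assumed values.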

Unfortunately, the disruption brought about by COVID-19 was unexpected and would have been hard to plan for. However, now that it is a reality, businesses are forced to plan for it and recover from it using every tool at their disposal. Historically, watershed events like COVID-19 have served as catalysts that accelerate deep underlying trends, trends that may have been dormant in the data without yet being statistically apparent. Such events have also spurred some of the highest levels of business innovation, establishing entirely new ways of doing business. Businesses would be better off following the saying “Prepare for the worst and hope for the best”. The strategies and approaches mentioned above are only some examples of the AI tools and techniques that could be leveraged to do so. At Kaizen Analytix, we deliver AI-driven solutions that augment our clients’ decision-making processes and provide incremental business value. Please reach out to us if you need help or guidance in navigating the current COVID-19 crisis.
